On-Device AI Voice Assistant

Introduction

At CES 2025 Synaptics is showcasing a wide range of impressive AI demos.

Among them is a contextual AI voice assistant, operating entirely on-device, with no cloud dependency or offloading, running on a Synaptics Astra™ Machina SL1680 linux development board.

You can buy the Synaptics Astra SL1680 board and run this open-source demo today:

Find the Python source code on github for you to customize and use.

Some cool capabilities:

- Understanding context-specific queries.

- Responding quickly (as low as 500ms) and accurately without cloud dependence.

- Tool calling peripherals or cloud services possible for extended functionality.

- Leveraging multi-modal systems for vision-based queries.

- Hallucination-free responses, providing Q&A dataset is clean (see Limitations).

The project builds upon the work and contributions of many open source AI projects and individuals, including:

- Speech-to-Text: Moonshine by Useful Sensors Inc., which is 5x faster than Whisper with better accuracy.

- Response Generation: Context-specific Q&A matching using an encoder-only language model

- Text-to-Speech: Piper by the Open Home Foundation.

How it works: visualization

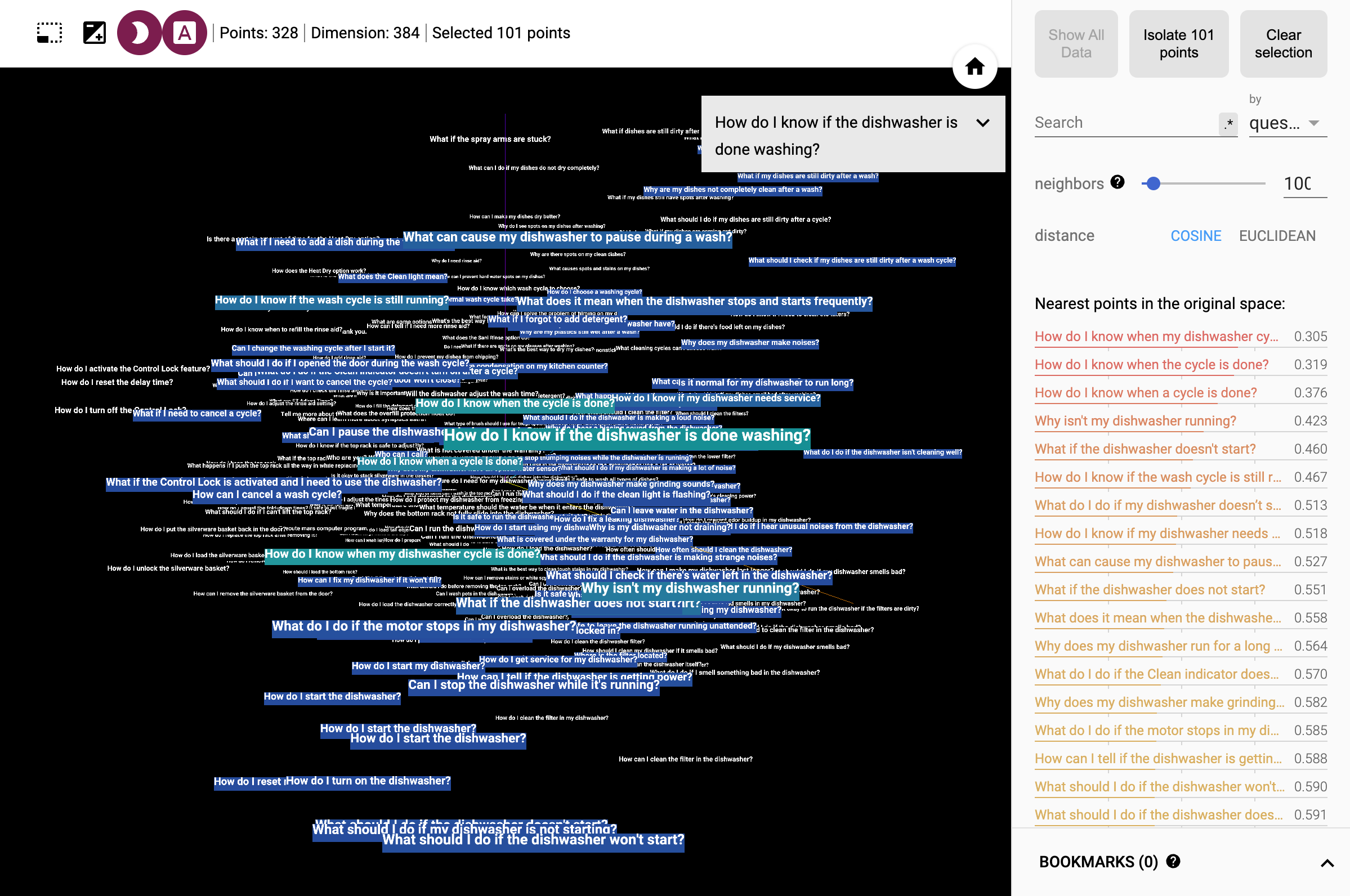

The assistant converts questions to vectors that represent meaning using a sentence transformer. This is similar data format to the which is stored in vector databases for RAG systems (Retrieval Augmented Generation). This enables a semantic search of voice queries - users don't need to phrase exactly.

A great way to get an intuition is to run this interactive visualization of the data. (When you're in the visualization, hit the "A" button to see the labels.)

Example Queries

Below are some common questions and responses that demonstrate how this AI assistant handles queries:

Basic Information

Q - Who are you? What is your name?

A - I am an AI assistant running on Synaptics Astra SL1680.

Q - My dishwasher won't start.

A - If your dishwasher isn't starting, check that it's plugged in, the circuit breaker hasn't tripped, the door is fully closed, and the Power button is activated.

Tool Calling

It's easy to integrate tool calling into the architecture, and actions can be invoked using loose semantic queries. This enables dynamic interaction with:

- Peripherals: Control GPIO or other hardware components.

- Cloud APIs: Call external APIs for extended functionality.

- Vision Models: Leverage NPU-based vision systems for multi-modal queries.

For example, you can enable the assistant to respond to a query by adding it to question_answers.json.

{

"question": "What's the time?",

"answer": "{time}"

},

When matched, the assistant will call {time} by looking it up in tools.json:

{

"token": "{time}",

"command": "date +\"%B %d at %I.%M%p\""

},

This could equally read or write a GPIO, or call a cloud API.

Example Queries with Tool Calling

Q - What's the weather like?

A - The weather in San Jose is partly cloudy, 58°F.

Q - What's the time?

A - The time is 3:45 PM.

On-device AI assistant architecture

The project is deliberately designed to be context specific, using encoder-only language model and pre-generated question-answer pairs to achieve low latency on an embedded linux board. However, semantic matching enables sufficiently natural, private, and responsive interactions about a single topic (e.g. commands or support questions for a specific device) with potential use in home, retail, or industrial applications that benefit from an effective voice UI.

(a) Pre-Generation Architecture

In this architecture, data preparation happens in advance to ensure quick and efficient on-device responses.

- Data Preparation:

- Manual data sources, such as user manuals or FAQs, were pre-processed into text chunks.

- Structured Q&A datasets were then generated using a cloud-based large language model (LLM), with prompt engineering to generate natural user questions based on source materials.

- Semantic Search Index:

- A text embeddings model transforms questions into high-dimensional vectors, enabling semantic search for similar-meaning queries. The encoder-only sentence transformer ensures high performance and avoids hallucination beyond pre-generated data.

- Deployment:

- The generated embeddings and answer text are packaged and loaded onto the device.

(b) On-Device Architecture

This architecture enables seamless interaction between the user and the assistant without any external dependencies. Notably, it leverages an encoder-only sentence transformer for reasoning and response generation, offering a novel approach that ensures high performance while eliminating the possibility of hallucination beyond pre-generated data.

- Voice Input Pipeline:

- User speech is processed via Voice Activity Detection (VAD) to identify active voice input.

- Semantic Search:

- Transcribed text undergoes semantic search to match the closest pre-generated Q&A pair using the embeddings using cosine similarity.

- Response Delivery:

- Matched responses are converted to audio using text-to-speech (TTS) models.

- Tool Querying:

- Peripherals, such as GPIO or other hardware components, can be controlled, and APIs can be invoked based on semantic matches. This enables dynamic interactions and extends assistant capabilities.

- Multi-Model Systems:

- Tool calling can include vision models running on the NPU for object recognition or environmental understanding. For example, the query "What can I see?" could invoke a vision model, responding with: "I can see a {call_vision}" for enhanced multi-modal capabilities.

On-Device Voice Pipeline

The following diagram represents the flow of data within the on-device voice assistant:

User Speech --> [VAD] --> [STT] --> [Embeddings] -----------> [Semantic search]

|

|

v

[Look up Answer]

|

|

[Run tool e.g. GPIO, Cloud APIs] <-----------yes---------[Contains Tool Call token?]

| |

| |

| v

--------Insert tool response into answer------------------> [TTS]

- Voice Activity Detection (VAD): Detects when a user begins speaking.

- Speech-to-Text (STT): Transcribes user speech into text using Moonshine.

- Embeddings: Converts user question into an embedding

- Semantic search: Perform cosine similarity search against pre-generated question embeddings.

- Text-to-Speech (TTS): Converts retrieved answers into natural-sounding audio output.

- Tool Querying: Allows interaction with peripherals, vision models, or external APIs for additional functionalities.

Future Directions

The potential for on-device AI is vast, with applications extending beyond home appliances to consumer, commercial, medical, and industrial devices. The integration of vision models and tool calling capabilities further enhances the functionality of these systems.

As the technology evolves, we anticipate more sophisticated interactions and improved performance, driven by advancements in small language models and efficient AI frameworks. Knowledge graphs and customization of the sentence transformer may yield better accuracy at smaller model size, beyond this, an agentic approach using fine tuned models holds promise.

Above is a prototype multi-modal implementation running a vision model on the NPU in parallel.